Datasets for localization and human-robot interaction

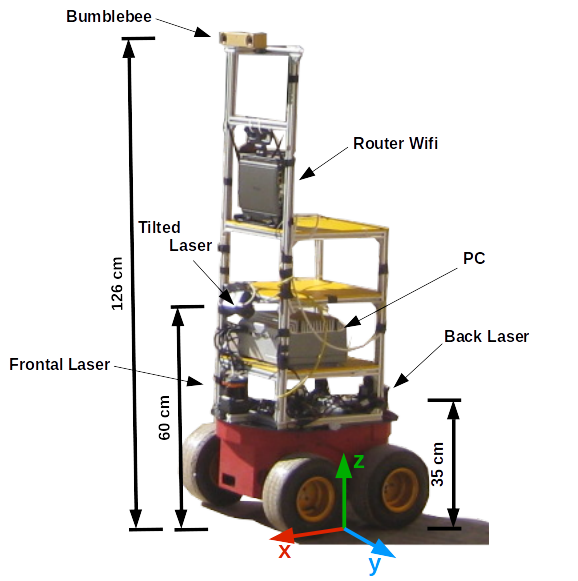

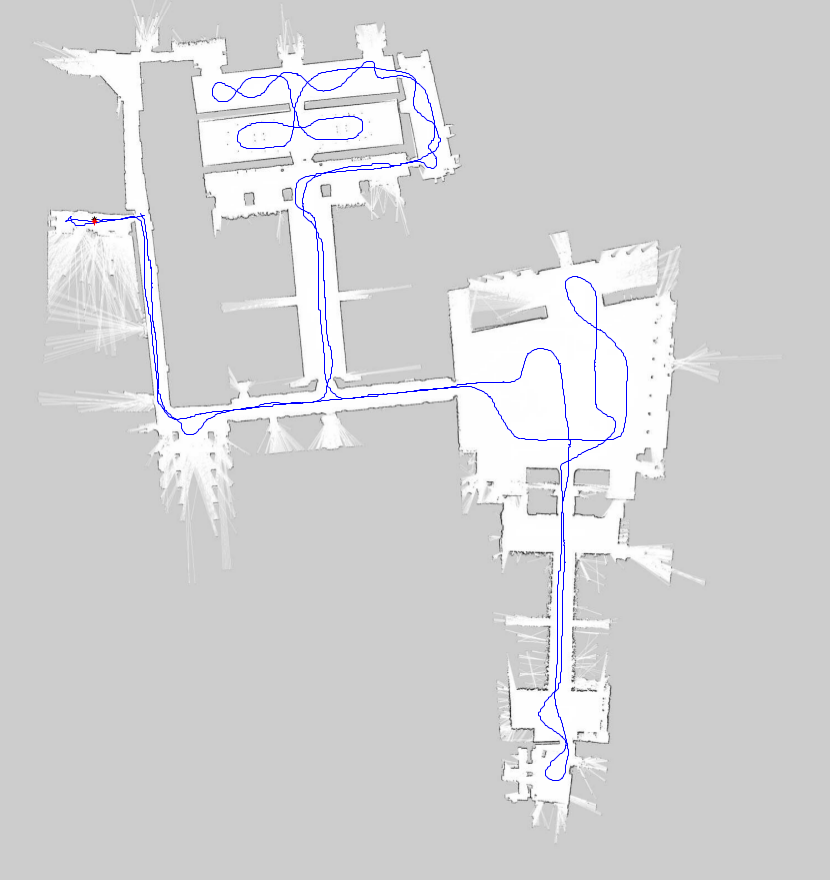

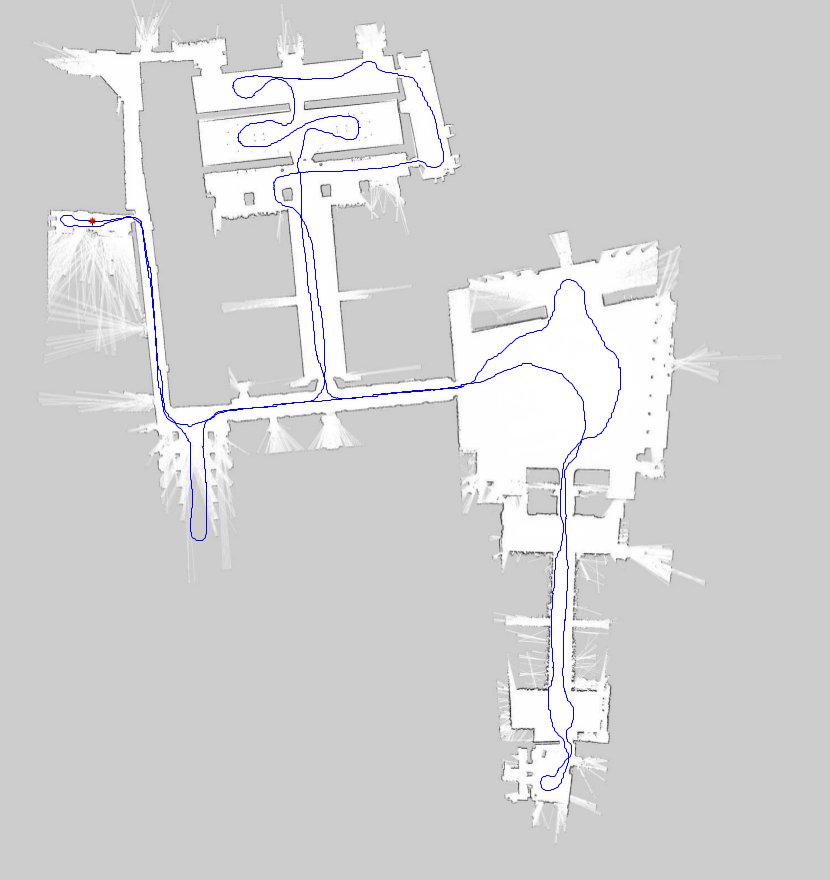

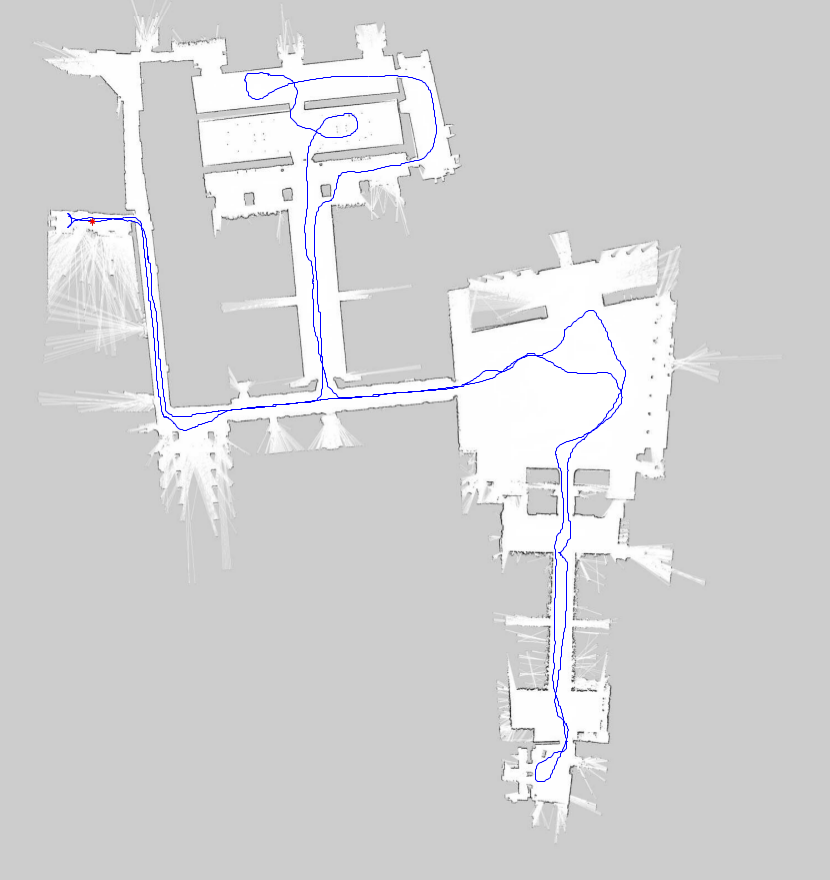

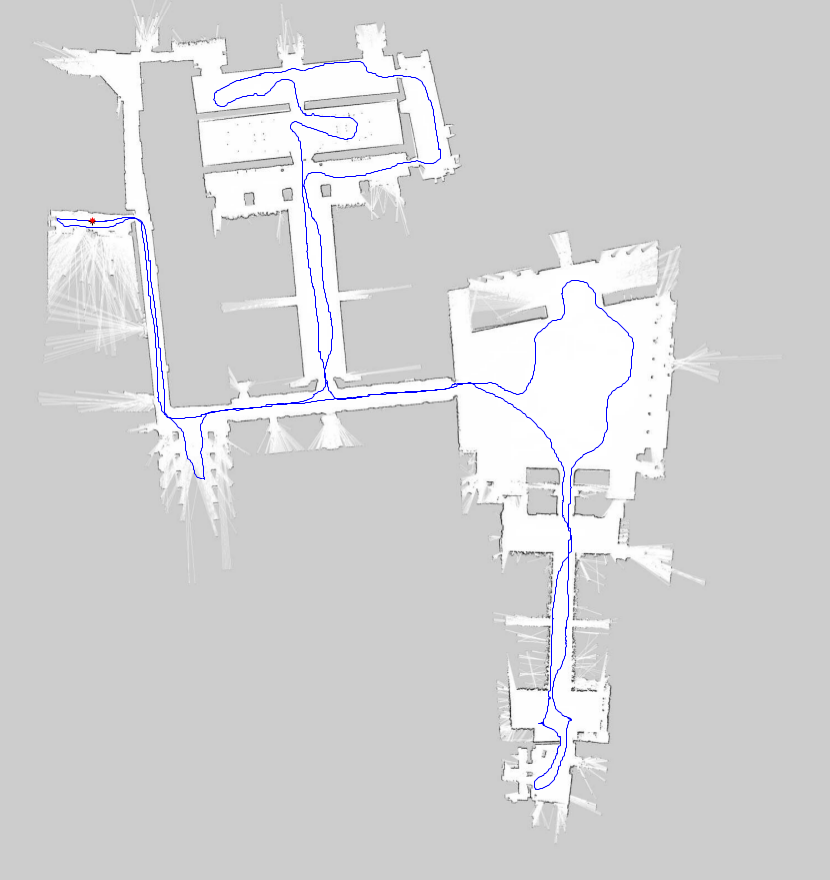

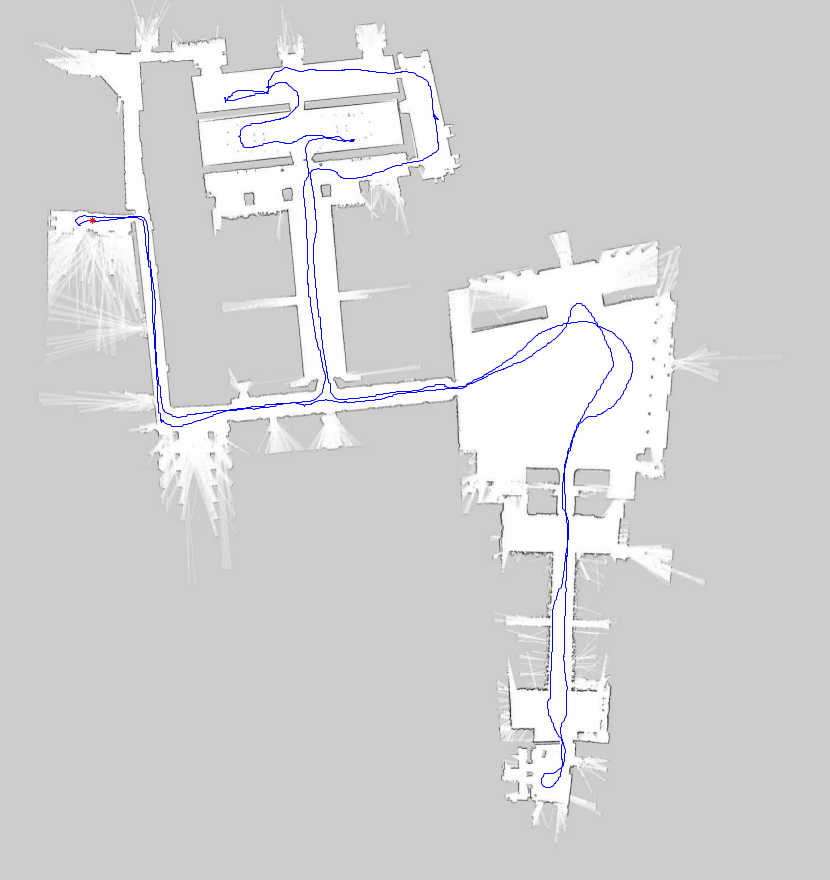

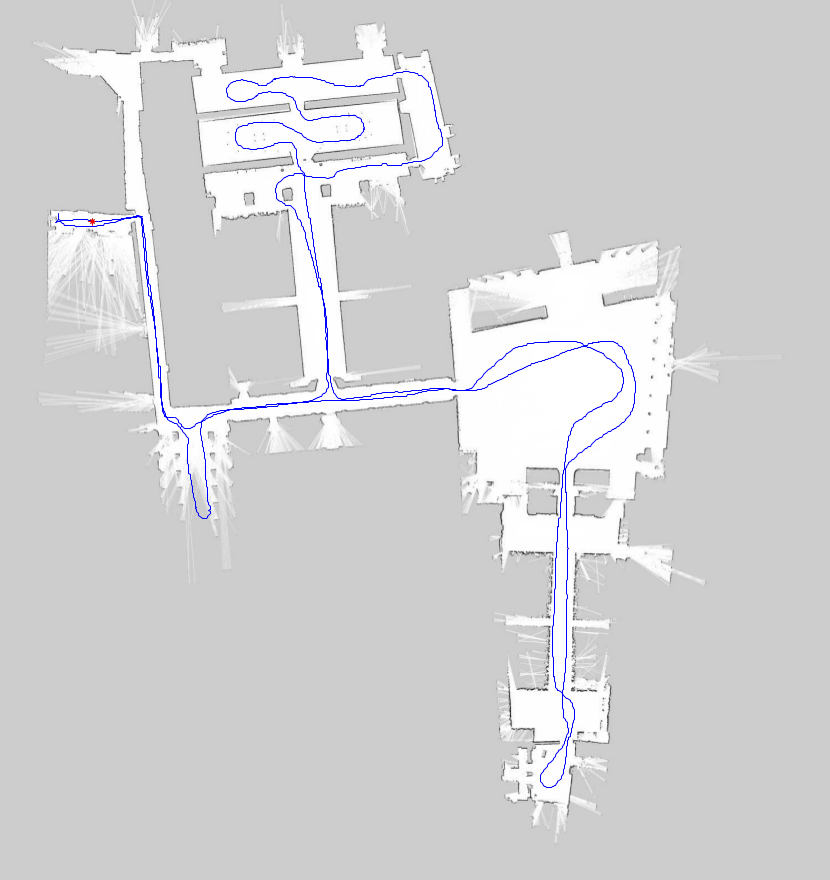

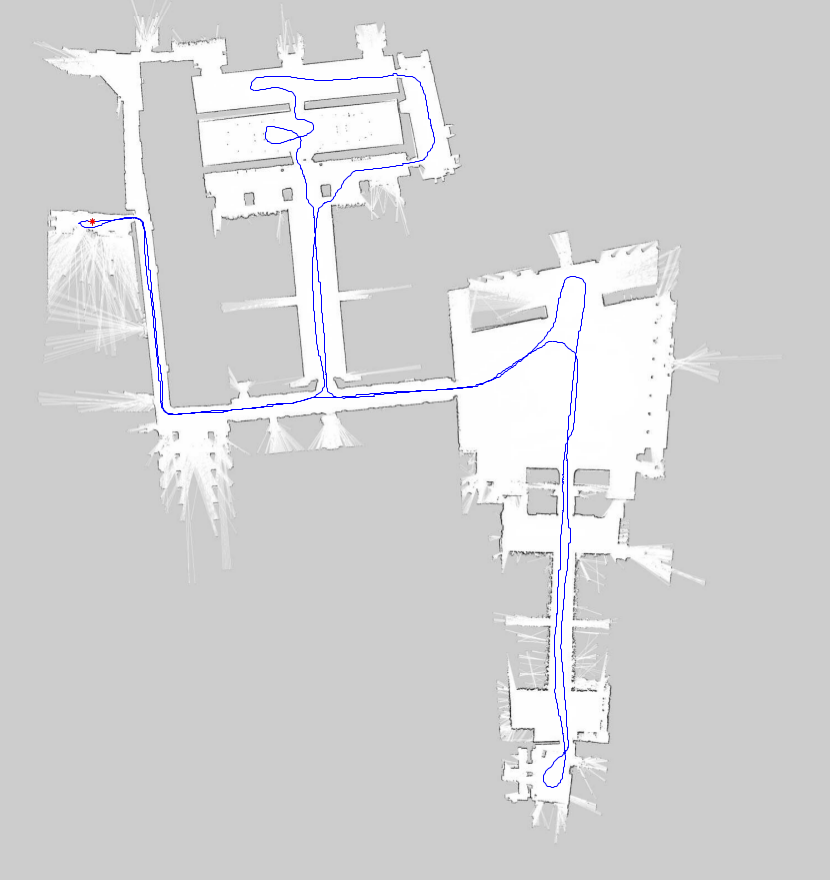

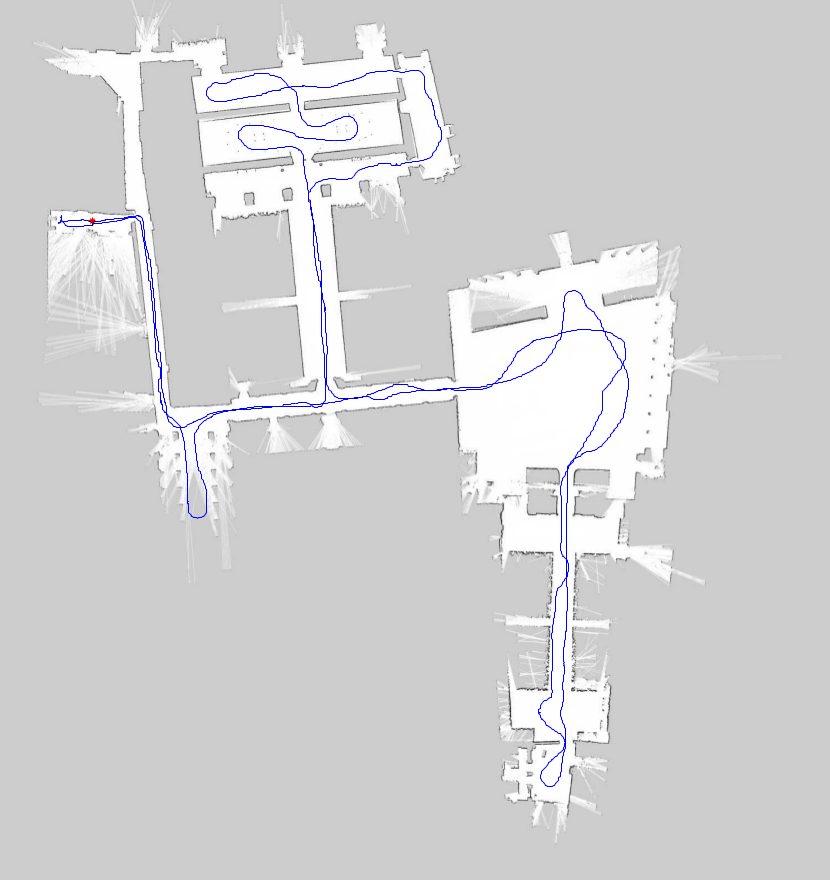

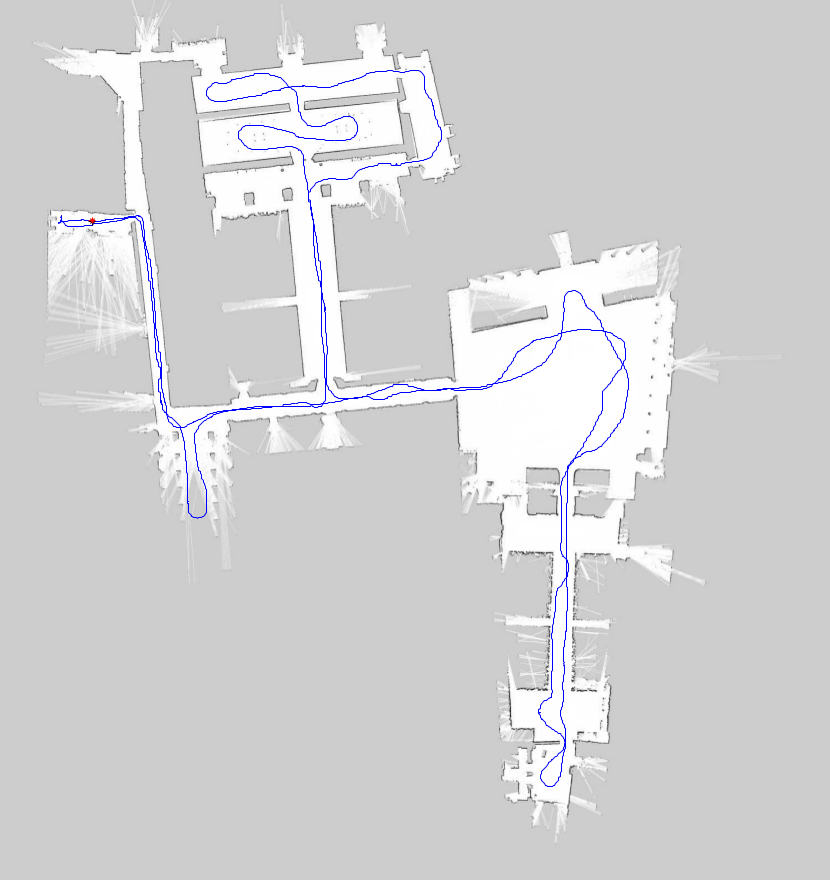

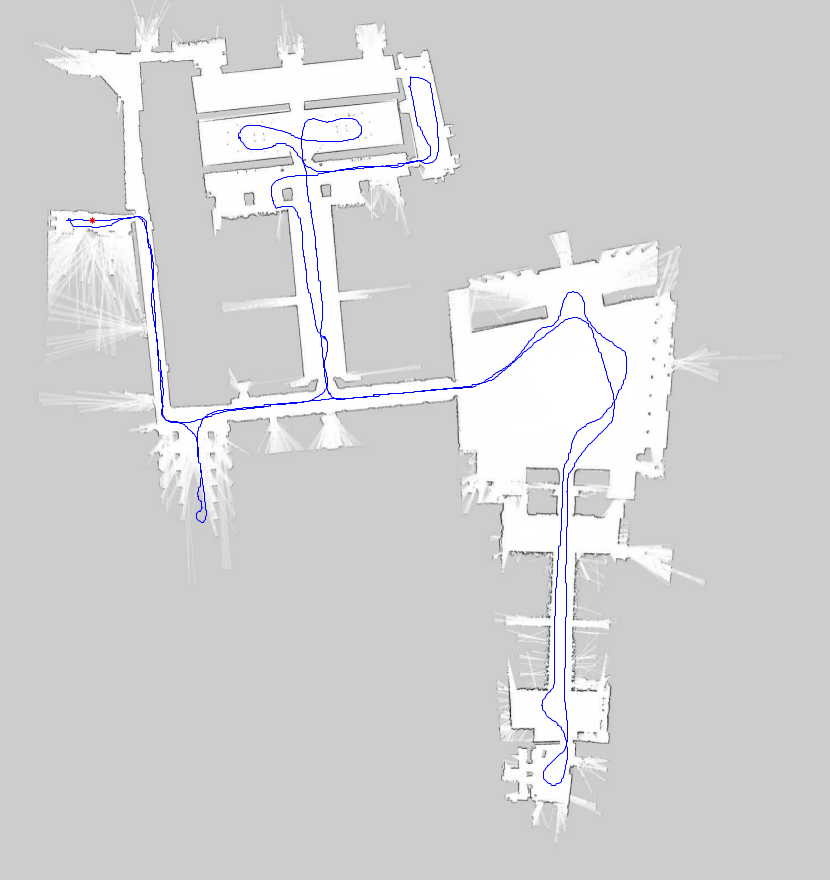

We present a novel set of data for evaluating visual place recognition in both indoor and outdoor environments, together with sensor information for evaluating human-robot interaction in crowded areas. The datasets were recorded in the Royal Alcázar of Seville (Spain). They comprise a large set of image sequences from a stereo camera and scan measurements from three lasers mounted on a moving robot.

The datasets are timestamped and stored using the logging functionality of the well-known Robot Operating System (ROS). The robot traveled more than one kilometer in each experiment, and each trial was performed at a different time of day so that the images capture how lighting conditions evolve. Tourist attendance also depends on the hour, so the datasets provide many examples for modeling, in a socially aware way, situations such as corridors, gates, queues, and groups of people.
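Because every message is timestamped, camera frames and laser scans can be associated after extraction from the logs. The following is a minimal sketch of nearest-timestamp matching, assuming the timestamps have already been read into sorted Python lists of seconds. The function names `nearest_index` and `pair_by_time` and the 0.05 s tolerance are illustrative assumptions, not part of the datasets or of ROS.

```python
from bisect import bisect_left


def nearest_index(sorted_stamps, t):
    """Return the index of the value in sorted_stamps that is closest to t."""
    if not sorted_stamps:
        raise ValueError("sorted_stamps must not be empty")
    i = bisect_left(sorted_stamps, t)
    if i == 0:
        return 0
    if i == len(sorted_stamps):
        return len(sorted_stamps) - 1
    # t lies between two stamps: pick the closer neighbour (ties go to the earlier one).
    before, after = sorted_stamps[i - 1], sorted_stamps[i]
    return i if (after - t) < (t - before) else i - 1


def pair_by_time(image_stamps, scan_stamps, max_gap=0.05):
    """Pair each image with its nearest scan, skipping pairs further apart than max_gap.

    Both inputs are assumed to be timestamps in seconds; scan_stamps must be sorted.
    Returns a list of (image_index, scan_index) tuples.
    """
    pairs = []
    for k, t in enumerate(image_stamps):
        j = nearest_index(scan_stamps, t)
        if abs(scan_stamps[j] - t) <= max_gap:
            pairs.append((k, j))
    return pairs


if __name__ == "__main__":
    # Hypothetical timestamps, for illustration only.
    images = [0.00, 0.10, 0.20, 1.00]
    scans = [0.01, 0.09, 0.24, 0.50]
    print(pair_by_time(images, scans))
```

The tolerance would need to be chosen from the actual sensor rates; an image with no scan within `max_gap` is simply left unpaired.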

A data paper on these datasets is available here