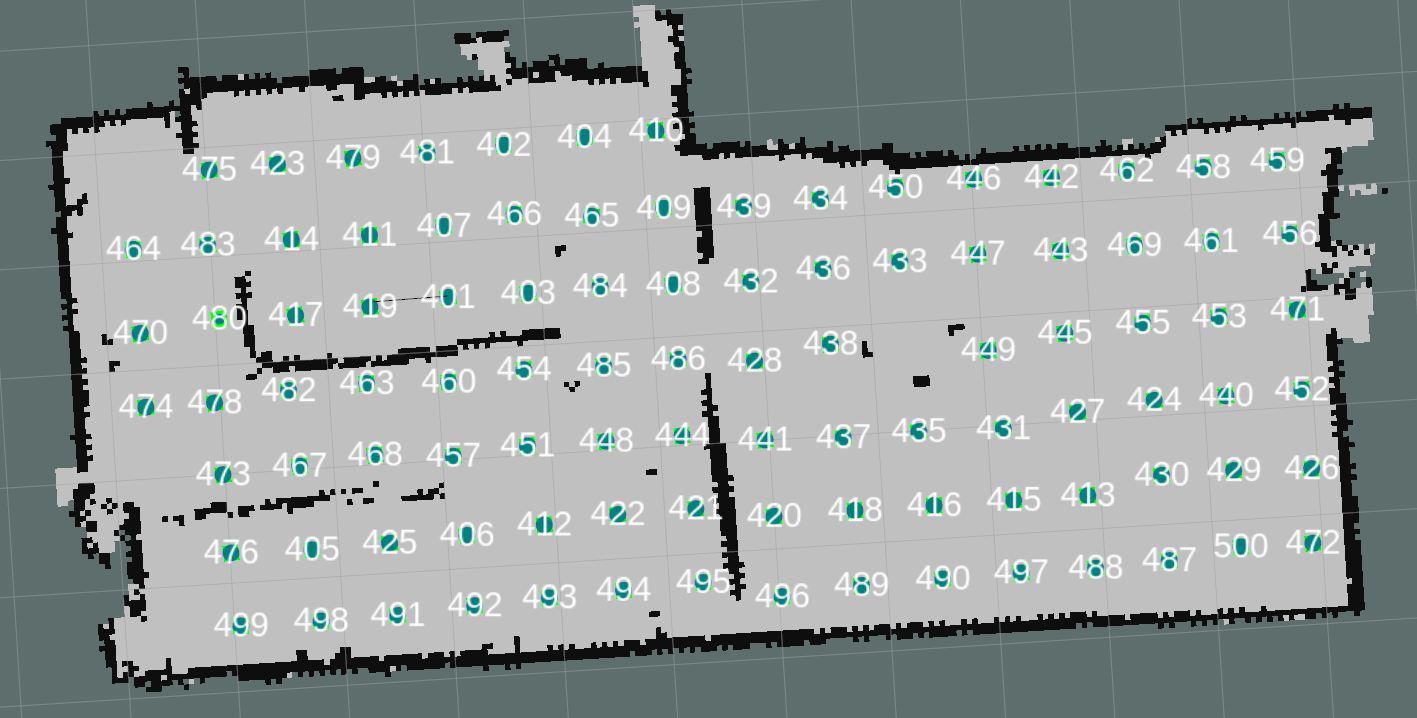

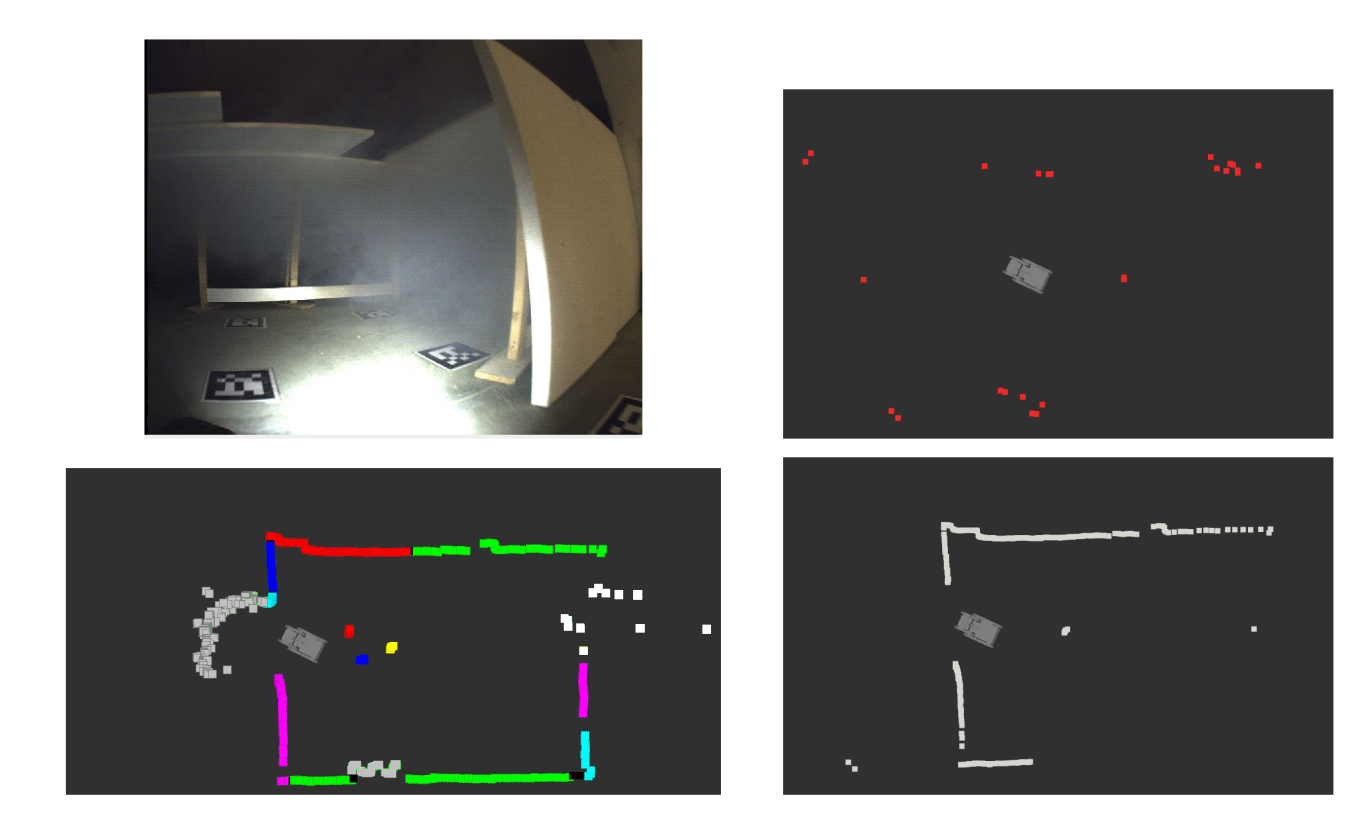

Datasets of an indoor scenario with artificial smoke

We present a dataset recorded in an underground location under reduced-visibility conditions caused by artificial smoke. The set consists of logs recorded with different levels of smoke. In addition, we provide users with a partial ground truth and baseline localization results for the platforms, which can be used to test localization and SLAM algorithms.

The datasets are timestamped and stored as Robot Operating System (ROS) bag files. The contents of each bag are detailed in the contents section. Localizing the platform in this environment is particularly challenging because it is GPS-denied, so we provide a ground-truth reference for comparing the results of different methods.
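As an illustration of how the ground-truth reference might be used, the following sketch compares an estimated trajectory against it: each estimated pose is paired with the ground-truth pose closest in time, and the root-mean-square (RMS) 2D position error is computed over the pairs. This is a minimal sketch under stated assumptions, not the evaluation procedure used in the paper. It assumes both trajectories have already been extracted from the bags as `(timestamp, x, y)` tuples expressed in the same reference frame; the function names `associate` and `position_rmse` and the 0.05 s association tolerance are hypothetical choices.

```python
from bisect import bisect_left
from math import sqrt
from typing import List, Tuple

# Hypothetical pose format: (timestamp in seconds, x in metres, y in metres).
Pose = Tuple[float, float, float]


def associate(estimate: List[Pose], ground_truth: List[Pose],
              max_dt: float = 0.05) -> List[Tuple[Pose, Pose]]:
    """Pair each estimated pose with the ground-truth pose closest in time.

    ground_truth must be sorted by timestamp. Pairs whose timestamps differ
    by more than max_dt seconds (an assumed tolerance) are discarded.
    """
    gt_times = [p[0] for p in ground_truth]
    pairs = []
    for est in estimate:
        i = bisect_left(gt_times, est[0])
        # The nearest ground-truth sample is one of the two neighbours
        # around the insertion point.
        candidates = [j for j in (i - 1, i) if 0 <= j < len(ground_truth)]
        if not candidates:
            continue
        j = min(candidates, key=lambda k: abs(gt_times[k] - est[0]))
        if abs(gt_times[j] - est[0]) <= max_dt:
            pairs.append((est, ground_truth[j]))
    return pairs


def position_rmse(pairs: List[Tuple[Pose, Pose]]) -> float:
    """Root-mean-square 2D position error over the associated pose pairs."""
    if not pairs:
        raise ValueError("no associated poses to evaluate")
    squared = [(e[1] - g[1]) ** 2 + (e[2] - g[2]) ** 2 for e, g in pairs]
    return sqrt(sum(squared) / len(squared))


if __name__ == "__main__":
    # Toy values for illustration only; they are not taken from the dataset.
    gt = [(0.0, 0.0, 0.0), (0.1, 1.0, 0.0), (0.2, 2.0, 0.0)]
    est = [(0.01, 0.0, 0.3), (0.11, 1.0, 0.4), (0.5, 9.0, 9.0)]
    pairs = associate(est, gt)
    print(len(pairs), round(position_rmse(pairs), 4))
```

In the toy example the third estimate has no ground-truth sample within the tolerance and is skipped, so the RMS error is computed over the two remaining pairs. If the estimate and the ground truth are expressed in different frames, a rigid alignment step would be needed before computing the error.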

Copyright

All datasets and benchmarks on this page are copyright by us and published under the Creative Commons Attribution-NonCommercial-ShareAlike 3.0 License. This means that you must attribute the work in the manner specified by the authors, you may not use this work for commercial purposes and if you alter, transform, or build upon this work, you may distribute the resulting work only under the same license.

Citation

Please cite our paper on RADAR and LIDAR data fusion if you use this dataset in your research.

Plain text

David Alejo, Rafael Rey, Jose Antonio Cobano, Fernando Caballero, and Luis Merino. “Data Fusion of RADAR and LIDAR for Robot Localization Under Low-Visibility Conditions in Structured Environments”, In D. Tardioli, V. Matellan, G. Heredia, M.F. Silva, and L. Marques, editors, ROBOT2022: Fifth Iberian Robotics Conference, Advances in Intelligent Systems and Computing, pp. 301–313, Springer International Publishing, 2023.

Acknowledgements

This work is partially supported by Programa Operativo FEDER Andalucía 2014-2020, Consejería de Economía, Conocimiento y Universidades (DeepBot, PY20_00817) and the Insertion project, funded by MCIN with grant number PID2021-127648OB-C31.